Neural network DSP IP from Cadence offers 1TMAC/s computational capacity

Cadence has released the Tensilica Vision C5 DSP, a standalone digital signal processor (DSP) for the automotive, surveillance, drone and mobile markets, at the Embedded Vision Summit in Santa Clara, California, this week.

It is, says Cadence, the industry’s first standalone, self-contained neural network DSP IP core optimised for vision, radar/lidar and fused-sensor applications that require high-availability neural network computation.

The Vision C5 DSP offers 1TMAC/s of computational capacity to run all neural network computational tasks, for example in automotive and wearable projects.

As neural networks get deeper and more complex, their computational requirements increase. Neural network architectures change regularly as new networks appear and new applications and markets emerge. These trends drive the need for a high-performance, general-purpose neural network processor for embedded systems that not only consumes little power but is also programmable, for future-proofing and lower risk.

Among these new applications, camera-based vision systems in automobiles, drones and security systems require two types of vision-optimised computation. First, the input from the camera is enhanced using traditional computational photography/imaging algorithms. Second, neural-network-based algorithms perform object detection and recognition. Existing neural network solutions use hardware accelerators attached to imaging DSPs, with the neural network code split between running some network layers on the DSP and offloading the convolutional layers to the accelerator. This is inefficient and consumes unnecessary power, says Cadence.

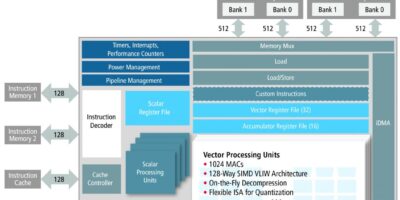

The Vision C5 DSP is architected as a dedicated neural-network-optimised DSP that accelerates all neural network computational layers (convolution, fully connected, pooling and normalisation), not just the convolution functions. This frees the main vision/imaging DSP to run image enhancement applications independently while the Vision C5 DSP runs inference tasks. By eliminating extraneous data movement between the neural network DSP and the main vision/imaging DSP, the Vision C5 DSP has a lower power budget than competing neural network accelerators. It also offers a simple, single-processor programming model for neural networks, adds Cadence.
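The data-movement argument can be illustrated with a toy count of how often activations must cross between engines. The sketch below is hypothetical: the layer list, tensor sizes and engine names are invented for illustration, it assumes 8-bit activations, and it models only handoffs, not Cadence's actual power figures. In the split model, convolutions go to an accelerator and every other layer stays on the imaging DSP; in the unified model, every layer runs on one neural network core.

```python
# Illustrative only: the layer list, tensor sizes and engine names below are
# hypothetical and are not taken from Cadence documentation.

# (layer name, layer type, output size in bytes, assuming 8-bit activations)
LAYERS = [
    ("conv1", "convolution", 56 * 56 * 64),
    ("pool1", "pooling", 28 * 28 * 64),
    ("norm1", "normalisation", 28 * 28 * 64),
    ("conv2", "convolution", 28 * 28 * 128),
    ("pool2", "pooling", 14 * 14 * 128),
    ("fc1", "fully_connected", 1000),
]


def split_placement(kind):
    """Accelerator-plus-DSP model: only convolutions go to the accelerator."""
    return "accelerator" if kind == "convolution" else "imaging_dsp"


def unified_placement(kind):
    """Single neural network processor: every layer type runs on one core."""
    return "nn_dsp"


def handoffs(layers, place):
    """Count engine switches and the activation bytes moved at each switch.

    A layer's output has to cross between engines whenever the next layer
    is placed on a different engine.
    """
    count, moved = 0, 0
    for (_, kind, out_bytes), (_, next_kind, _) in zip(layers, layers[1:]):
        if place(kind) != place(next_kind):
            count += 1
            moved += out_bytes
    return count, moved


if __name__ == "__main__":
    print("split:", handoffs(LAYERS, split_placement))      # (3, 351232)
    print("unified:", handoffs(LAYERS, unified_placement))  # (0, 0)
```

Even in this small network, the split placement forces three transfers totalling 351,232 bytes per inference, while the unified placement needs none. Real savings depend on the network, memory system and data layout.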

“The applications for deep learning in real-world devices are tremendous and diverse, and the computational requirements are challenging,” said Jeff Bier, founder of the Embedded Vision Alliance. “Specialised programmable processors like the Vision C5 DSP enable deployment of deep learning in cost- and power-sensitive devices.”

The Vision C5 DSP offers 1TMAC/s of computational capacity (four times the throughput of the Cadence Vision P6 DSP) in less than 1mm² of silicon area, giving very high throughput on deep learning kernels. It has 1,024 8-bit multiply-accumulate units (MACs) or 512 16-bit MACs, for 8-bit and 16-bit resolution respectively. Its very long instruction word (VLIW), single instruction, multiple data (SIMD) architecture provides 128-way 8-bit SIMD or 64-way 16-bit SIMD. The Vision C5 DSP is architected for multi-core designs, enabling a multi-teraMAC solution in a small footprint, says Cadence. It also has an integrated iDMA and an AXI4 interface, and uses the same software toolset as the Vision P5 and P6 DSPs.
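The quoted figures can be cross-checked with some back-of-envelope arithmetic. The sketch below assumes an idealised peak in which every MAC unit completes one MAC per cycle, and that the 16-bit MACs run at the same clock; neither assumption comes from the article, and the 56x56, 64-channel, 3x3 convolution layer is a hypothetical example. Under those assumptions, 1TMAC/s from 1,024 MACs implies a clock of roughly 1GHz.

```python
# Back-of-envelope arithmetic from the quoted figures. The idealised
# one-MAC-per-unit-per-cycle assumption and the example layer are hypothetical.

PEAK_MAC_PER_S = 1e12   # 1 TMAC/s, as quoted
MACS_8BIT = 1024        # 8-bit MAC units
MACS_16BIT = 512        # 16-bit MAC units


def implied_clock_hz(peak_mac_per_s, mac_units):
    """Clock rate needed if every MAC unit finishes one MAC per cycle."""
    return peak_mac_per_s / mac_units


def conv_macs(h_out, w_out, c_in, c_out, k):
    """MACs for a k x k convolution layer (single image, no batching)."""
    return h_out * w_out * c_in * c_out * k * k


clock = implied_clock_hz(PEAK_MAC_PER_S, MACS_8BIT)  # ~0.98 GHz
peak_16bit = MACS_16BIT * clock                      # assumes same clock for 16-bit
p6_implied = PEAK_MAC_PER_S / 4                      # from "four times the throughput"
simd_bits = (128 * 8, 64 * 16)                       # vector width in bits, both modes

layer = conv_macs(56, 56, 64, 64, 3)                 # hypothetical layer
ideal_ms = layer / PEAK_MAC_PER_S * 1e3              # at 100% MAC utilisation

if __name__ == "__main__":
    print(f"implied clock: {clock / 1e6:.1f} MHz")
    print(f"16-bit peak: {peak_16bit / 1e12:.2f} TMAC/s")
    print(f"implied Vision P6 peak: {p6_implied / 1e9:.0f} GMAC/s")
    print(f"SIMD widths (bits): {simd_bits}")
    print(f"example layer: {layer:,} MACs, ideal {ideal_ms:.3f} ms")
```

Both SIMD modes work out to the same 1,024-bit vector width, consistent with the halving of MAC count at 16-bit resolution. Real layer times will be longer than the idealised figure, since utilisation below 100% and memory traffic are ignored here.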

The Vision C5 DSP also comes with the Cadence neural network mapper toolset, which will map any neural network trained with tools such as Caffe and TensorFlow into executable, highly optimised code for the Vision C5 DSP, leveraging a comprehensive set of hand-optimised neural network library functions.
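The article does not say how the mapper handles numeric precision. As a generic illustration only, and not a description of Cadence's toolset, here is one common way trained floating-point values are run on 8-bit MAC hardware: symmetric per-tensor quantisation to int8, integer multiply-accumulate, then rescaling. The weights, activations and function names are hypothetical, and Python's unbounded integers stand in for whatever accumulator width the hardware actually uses, which is an assumption.

```python
# Generic int8 illustration; not a description of Cadence's mapper.


def quantize_symmetric_int8(values):
    """Map floats to int8 codes using one per-tensor scale and zero-point 0."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    codes = [max(-127, min(127, round(v / scale))) for v in values]
    return codes, scale


def int8_mac(a_codes, b_codes):
    """Multiply int8 pairs and accumulate.

    Python ints never overflow, so this stands in for the wider accumulator
    an 8-bit MAC array would need; the real accumulator width is an
    assumption not stated in the article.
    """
    acc = 0
    for a, b in zip(a_codes, b_codes):
        acc += a * b
    return acc


weights = [0.6, -0.3, 0.9]       # hypothetical trained weights
activations = [1.2, -0.4, 0.8]   # hypothetical input activations

w_q, w_scale = quantize_symmetric_int8(weights)
x_q, x_scale = quantize_symmetric_int8(activations)
acc = int8_mac(w_q, x_q)
approx = acc * w_scale * x_scale  # rescale the integer result back to float
exact = sum(w * x for w, x in zip(weights, activations))

if __name__ == "__main__":
    print(w_q, x_q, acc)
    print(f"float result {exact:.4f}, int8 result {approx:.4f}")
```

Here the int8 path gives about 1.564 against an exact 1.56: a small rounding error traded for cheap 8-bit multiplies.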

WEARTECHDESIGN.COM – Latest News/Advice on Technology for Wearable Devices
